Trusted by startups and teams shipping LLM products



Features

Explore the core parts of Boson that teams use every day.

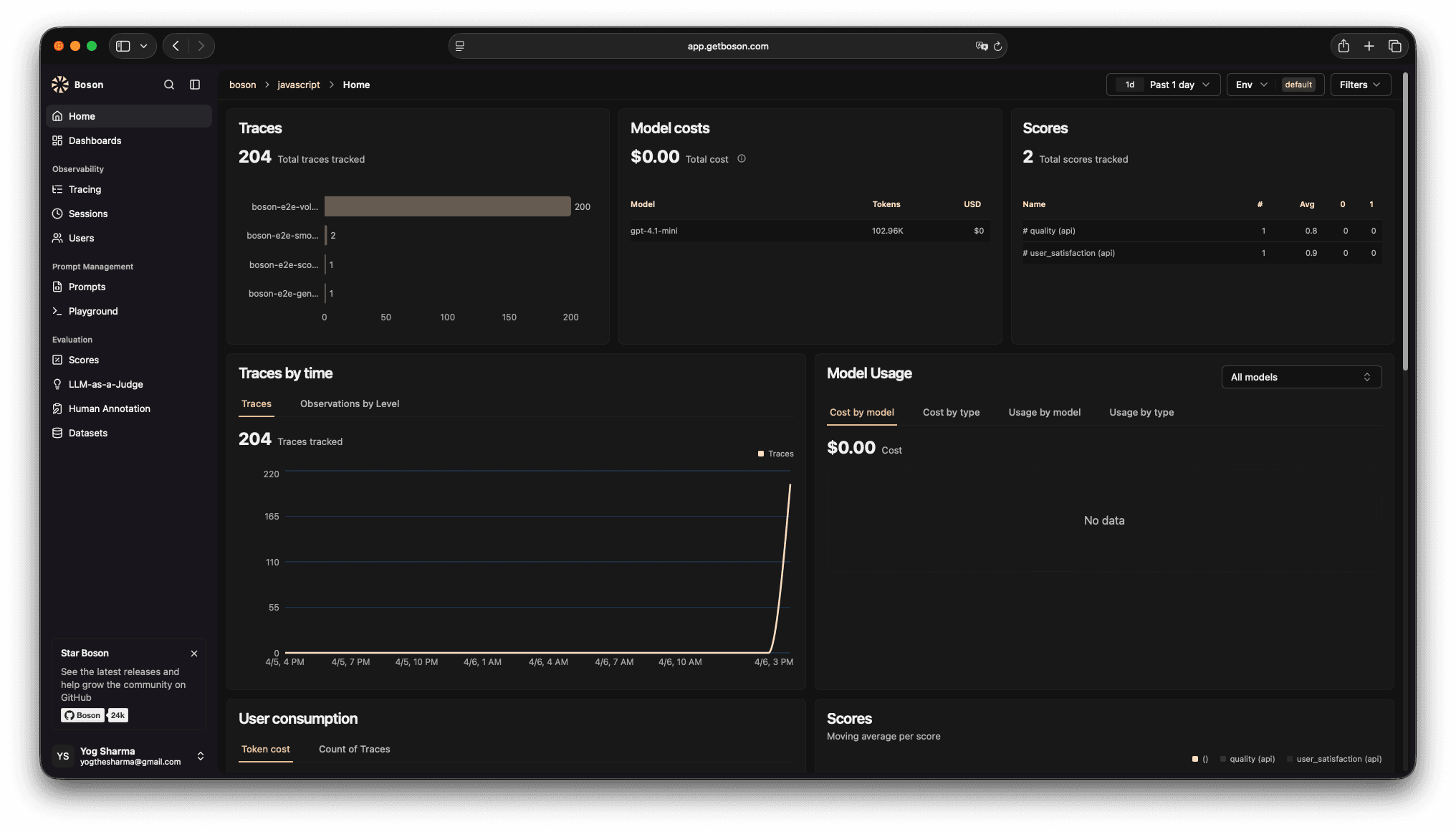

See every LLM call end-to-end with timings, inputs/outputs, and rich context to debug faster.

Works across providers, frameworks, and workflows—so you can instrument once and iterate faster.

A simple workflow: add instrumentation, debug with traces, and validate changes with evals.
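As a rough illustration of the "validate changes with evals" step, here is a minimal sketch of an eval loop in plain JavaScript. It does not use the Boson SDK; `runEvals`, the case format (`input` plus a `check` function), and `stubAnswer` are all hypothetical names chosen for this example.

```javascript
// Hypothetical eval harness: NOT part of @getboson/sdk.
// Runs each case through `target` and reports the fraction that passed.
async function runEvals(target, cases) {
  const results = [];
  for (const c of cases) {
    const output = await target(c.input);
    results.push({ input: c.input, output, passed: Boolean(c.check(output)) });
  }
  const passedCount = results.filter((r) => r.passed).length;
  const passRate = cases.length === 0 ? 0 : passedCount / cases.length;
  return { results, passRate };
}

// Assumed case format: an input object plus a predicate on the output.
const evalCases = [
  { input: { question: "2+2" }, check: (out) => out.includes("4") },
  { input: { question: "capital of France" }, check: (out) => /paris/i.test(out) },
];

// Stub standing in for a real model call.
const stubAnswer = async ({ question }) =>
  question === "2+2" ? "4" : "I don't know";
```

Calling `runEvals(stubAnswer, evalCases)` before and after a prompt or model change gives a pass rate you can compare across versions; a real setup would likely swap the stub for the instrumented function and use richer scoring than a boolean check.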

Start with one endpoint or one workflow. Capture prompts, responses, latency, token usage, and custom metadata.

```js
// Install: npm i @getboson/sdk
import { observe } from "@getboson/sdk";

export const answer = observe(async ({ question }) => {
  // call your model/provider here
  return "…";
});
```

Shipping LLM features is messy. Boson gives you a single workflow for tracing, debugging, and evaluation without building an internal platform first.
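To make the captured fields more concrete, the sketch below shows roughly what a tracing wrapper could record for each call: input, output, latency, errors, and custom metadata. This is an assumption-laden stand-in, not how `observe` is implemented; `traced`, the record shape, and the `sink` callback are hypothetical. Token usage is left out because it depends on the provider's response format.

```javascript
// Hypothetical tracing wrapper: NOT the @getboson/sdk implementation.
// Wraps an async function and hands one record per call to `sink`.
function traced(name, fn, sink) {
  return async function (input, metadata = {}) {
    const startedAt = Date.now();
    const record = { name, input, metadata, output: null, error: null, latencyMs: null };
    try {
      record.output = await fn(input);
      return record.output;
    } catch (err) {
      record.error = String(err);
      throw err; // rethrow so callers still see the failure
    } finally {
      // Runs on both success and failure, so every call is recorded.
      record.latencyMs = Date.now() - startedAt;
      sink(record);
    }
  };
}

// Usage with an in-memory sink and a fake model call.
const traceRecords = [];
const tracedAnswer = traced(
  "answer",
  async ({ question }) => `echo: ${question}`,
  (record) => traceRecords.push(record)
);
```

Recording in `finally` means failed calls show up in the trace alongside successful ones, which is usually what you want when debugging latency and quality issues.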

Here’s what customers say about building with Boson.

“Boson made it obvious where our latency and quality issues were. We shipped improvements in days, not weeks.”

AI platform team

“The eval workflow finally gave us a repeatable way to measure changes.”

LLM team

“The tracing UI is clean and fast — it’s now part of our daily workflow.”

B2B SaaS

“We reduced regression risk by running evals before every release.”

Platform

“Support is excellent — quick responses and great product direction.”

AI product

“Boson became the source of truth for prompts, runs, and quality.”

AI startup

“We finally have consistent instrumentation and a dashboard we can trust across teams.”

Enterprise AI

Book a demo or start integrating in minutes. Boson helps your team debug faster, measure quality, and iterate with confidence.